A queue and a log are different things. SQS is a queue. Kafka is a log. Most "SQS vs Kafka" debates are people arguing over which is the better screwdriver when one of them is a hammer.

Every backend team eventually faces this decision. You need asynchronous messaging. Something to decouple services, handle background work, or stream events between systems. You Google "SQS vs Kafka," find twenty articles that list features side by side, and walk away no closer to a decision.

This article won't rehash "what is SQS" or "what is Kafka." It covers when each one breaks, the real trade-offs you'll hit in production, and how to make the right call for your specific system. Including the option most articles ignore: using both.

SQS and Kafka aren't interchangeable — SQS is a managed message queue, Kafka is a distributed event streaming platform. Pick SQS for fire-and-forget background jobs, decoupled microservice tasks, and anything where messages are consumed once and discarded. Pick Kafka when you need event replay, multiple consumer groups reading the same stream, ordering at scale, or analytics pipelines. Most production systems eventually need both — SQS for work queues, Kafka for the event log.

The Real Problem: Why Teams Get This Wrong

The messaging system choice goes wrong in three predictable ways.

Overengineering with Kafka. A team building a CRUD app with 500 requests/minute deploys a three-broker Kafka cluster because "we might need event replay someday." Six months later, they've spent more time managing Kafka than building features. The replay capability has never been used. They could have shipped the same system with SQS in an afternoon.

Underestimating SQS limitations. A team picks SQS because it's simple. The system grows. Now they need multiple consumers processing the same events. They need to replay messages after a failed deployment. They need strict ordering across millions of messages. SQS can't do any of this well, and the migration to Kafka mid-growth is painful.

Choosing based on hype instead of requirements. Kafka has massive mindshare. It's what FAANG companies use. So teams adopt it without asking whether their 200-message-per-second workload justifies a distributed commit log. The answer is almost always no.

The right question isn't "which is better?" It's: what does your system actually need today, and what will it need in 12 months?

The Mental Model: Two Different Tools for Two Different Problems

Before comparing features, get the fundamental distinction right.

SQS is about decoupling. Service A puts a message in a queue. Service B picks it up and processes it. The message is consumed once and deleted. SQS is a buffer between producers and consumers. That's it.

Kafka is about event streams and replayability. Service A writes an event to a topic. Services B, C, and D can each read that event independently, at their own pace, and can re-read it days later. Kafka is a distributed, append-only log that retains events. It's closer to a database than a queue.

SQS Mental Model:

```
Producer → [Queue] → Consumer (message deleted after processing)
```

Kafka Mental Model:

```
Producer → [Append-Only Log] → Consumer A (offset 42)
                             → Consumer B (offset 38)
                             → Consumer C (offset 50)
                             → (events retained for days/weeks/forever)
```

This distinction drives every trade-off that follows. If you need a task queue - fire and forget, process once, move on - SQS is the right tool. If you need an event stream - multiple consumers, replay capability, event sourcing - Kafka is the right tool. Most confusion comes from trying to use one where the other belongs.
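To make the two mental models concrete, here is a toy in-memory sketch. `ToyQueue` and `ToyLog` are hypothetical classes written for this illustration, not part of any SQS or Kafka client, and they ignore durability, visibility timeouts, and partitions entirely.

```python
from collections import deque


class ToyQueue:
    """Queue semantics: a message is handed out once, then it is gone."""

    def __init__(self):
        self._messages = deque()

    def send(self, message):
        self._messages.append(message)

    def receive(self):
        # Receiving removes the message; no other consumer will ever see it.
        return self._messages.popleft() if self._messages else None


class ToyLog:
    """Log semantics: events are appended and kept; each reader tracks an offset."""

    def __init__(self):
        self._events = []
        self._offsets = {}  # consumer name -> next offset to read

    def append(self, event):
        self._events.append(event)

    def read(self, consumer):
        offset = self._offsets.get(consumer, 0)
        if offset >= len(self._events):
            return None
        self._offsets[consumer] = offset + 1
        return self._events[offset]


queue = ToyQueue()
log = ToyLog()
for event in ["order_created", "order_paid"]:
    queue.send(event)
    log.append(event)

queue_first = queue.receive()    # one worker takes "order_created"
queue_second = queue.receive()   # the next receive gets the next message, not a copy
log_for_a = [log.read("A"), log.read("A")]
log_for_b = [log.read("B"), log.read("B")]  # B sees the same events A saw
```

In the queue, two receivers split the work between them. In the log, every named reader sees the full sequence, which is the behavior Kafka consumer groups build on.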

Core Differences That Actually Matter in Production

Feature comparisons are everywhere. Most of them are useless because they don't explain why the difference matters. Here are the five differences that will actually affect your system.

1. Message Retention and Replay

SQS: Messages are deleted after a consumer successfully processes them. Default retention is 4 days, maximum 14 days. Once deleted, gone forever. There's no way to "replay" processed messages.

Kafka: Events are retained based on configurable policies - by time (e.g., 7 days, 30 days, forever) or by size. Consumers track their position (offset) in the log. They can rewind to any past offset and reprocess events.

Why this matters:

A bad deployment corrupts data for 10,000 users. With Kafka, you fix the bug, reset the consumer offset to before the deployment, and reprocess the events. The data is still there. With SQS, those messages are gone. You're writing a one-off migration script against whatever data you can piece together from logs and database snapshots.

Kafka replay scenario:

```
Day 1: Consumer processes events 1-50,000 ✓
Day 2: Deploy buggy code, events 50,001-55,000 processed incorrectly ✗
Day 3: Fix bug, reset consumer offset to 50,001
Day 3: Reprocess events 50,001-55,000 correctly ✓
```

SQS equivalent scenario:

```
Day 1: Consumer processes messages 1-50,000 (messages deleted) ✓
Day 2: Deploy buggy code, messages 50,001-55,000 processed incorrectly ✗
Day 3: Fix bug... messages are gone. Write manual recovery script. Pray.
```

The insight most people miss: Kafka can act as a source of truth for events. SQS cannot. If your system needs an audit trail, event replay, or the ability to rebuild state from a stream of events, SQS is the wrong tool regardless of any other consideration.
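The replay scenario can be sketched without a cluster. In this minimal simulation a plain list stands in for the retained log, and `consume`, `buggy_handler`, and `fixed_handler` are made-up names for the illustration. Against a real cluster, the rewind would typically be done by seeking the consumer or resetting the group's committed offsets.

```python
def consume(events, start_offset, handler):
    """Apply handler to every event from start_offset on; return the next offset."""
    for event in events[start_offset:]:
        handler(event)
    return len(events)


# The retained log: nothing is deleted when a consumer reads it.
events = [{"order_id": i, "amount_cents": 1000 + i} for i in range(5)]
totals = {}


def buggy_handler(event):
    totals[event["order_id"]] = event["amount_cents"] * 100  # bug: wrong scale


def fixed_handler(event):
    totals[event["order_id"]] = event["amount_cents"]


committed = consume(events, 0, buggy_handler)  # the bad deploy processes everything
after_bug = dict(totals)

committed = consume(events, 0, fixed_handler)  # rewind to offset 0 and replay
```

Replay is only safe because the handler overwrites state instead of incrementing it. A handler that is not idempotent would double-apply every replayed event.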

2. Ordering Guarantees

SQS Standard: No ordering guarantee. Messages can arrive out of order. At-least-once delivery - duplicates are possible.

SQS FIFO: Strict ordering within a "message group." Without high-throughput mode, a FIFO queue is capped at 300 API calls/second per action, which works out to 3,000 messages/second with batches of 10. You can have multiple message groups, but ordering is only guaranteed within each group.

Kafka: Ordering is guaranteed within a partition. A topic can have hundreds or thousands of partitions, each maintaining strict order independently. Total throughput scales linearly with partition count.

```python
# SQS FIFO - ordering per message group
import boto3

sqs = boto3.client('sqs')
# Placeholder URL - substitute your own FIFO queue
fifo_queue_url = 'https://sqs.us-east-1.amazonaws.com/123456789012/orders.fifo'

# These three messages are guaranteed to arrive in order
# because they share a MessageGroupId
sqs.send_message(
    QueueUrl=fifo_queue_url,
    MessageBody='{"order_id": 123, "status": "created"}',
    MessageGroupId='order-123',          # Ordering key
    MessageDeduplicationId='evt-001'     # Required unless content-based dedup is enabled
)
sqs.send_message(
    QueueUrl=fifo_queue_url,
    MessageBody='{"order_id": 123, "status": "paid"}',
    MessageGroupId='order-123',
    MessageDeduplicationId='evt-002'
)
sqs.send_message(
    QueueUrl=fifo_queue_url,
    MessageBody='{"order_id": 123, "status": "shipped"}',
    MessageGroupId='order-123',
    MessageDeduplicationId='evt-003'
)
```

```python
# Kafka - ordering per partition via key-based routing
import json

from kafka import KafkaProducer

producer = KafkaProducer(
    bootstrap_servers='kafka:9092',
    value_serializer=lambda v: json.dumps(v).encode()
)

# All messages with the same key go to the same partition → ordered
producer.send('orders', key=b'order-123', value={"order_id": 123, "status": "created"})
producer.send('orders', key=b'order-123', value={"order_id": 123, "status": "paid"})
producer.send('orders', key=b'order-123', value={"order_id": 123, "status": "shipped"})

# Different key → possibly a different partition → independent ordering
producer.send('orders', key=b'order-456', value={"order_id": 456, "status": "created"})

producer.flush()  # send() is asynchronous; flush before the process exits
```

Where SQS FIFO hits its ceiling:

A few thousand messages/second per queue is fine for most order-processing systems. But if you're processing 50,000 events/second across millions of entities - user activity streams, IoT telemetry, clickstream data - you'll exhaust SQS FIFO's throughput. You'd need to shard across many queues, which defeats the simplicity advantage.

Where Kafka shines: A single topic with 100 partitions can handle hundreds of thousands of messages/second with per-key ordering. Scaling is adding partitions, not managing separate queues.

3. Throughput and Scaling

SQS Standard: Auto-scales transparently. AWS handles all capacity management. Practically unlimited throughput - AWS advertises "nearly unlimited" transactions per second. You never think about scaling SQS. This is its biggest operational advantage.

SQS FIFO: Capped. 300 API calls/second per action on a queue, or 3,000 messages/second with batching. Up to 20,000 messages/second per queue with high-throughput mode. These limits are hard walls, not soft guidelines.

Kafka: Throughput scales with partitions and brokers. A well-configured cluster can handle millions of messages per second. But "well-configured" is doing heavy lifting in that sentence.

Real numbers from production systems:

| Metric | SQS Standard | SQS FIFO | Kafka (3 brokers) | Kafka (10 brokers) |
|---|---|---|---|---|
| Messages/second | ~unlimited | ~3,000-20,000 | ~200,000 | ~1,000,000+ |
| Latency (p50) | 1-10ms | 5-20ms | 2-5ms | 2-5ms |
| Latency (p99) | 20-100ms | 50-200ms | 10-50ms | 10-50ms |
| Scaling effort | Zero | Zero | Manual/planned | Manual/planned |

The catch with Kafka throughput: Those numbers assume proper partitioning, tuned batch.size and linger.ms, sufficient replication factor, and correctly sized brokers. Out of the box, a default Kafka deployment won't hit anywhere near those numbers. You're paying for throughput with operational knowledge.

```properties
# Kafka producer tuning for high throughput
# (comments sit on their own lines: properties files do not support trailing comments)

# 64KB batches (default 16KB)
batch.size=65536
# Wait up to 10ms to fill batch
linger.ms=10
# Compress batches - huge throughput gain
compression.type=lz4
# 128MB producer buffer
buffer.memory=134217728
# Leader ack only (trade durability for speed)
acks=1

# Kafka broker tuning

# Match to CPU cores
num.io.threads=8
num.network.threads=3
# 1MB send buffer
socket.send.buffer.bytes=1048576
socket.receive.buffer.bytes=1048576
# Flush to disk every 10K messages
log.flush.interval.messages=10000
```

My take: If your throughput needs are under 10,000 messages/second, SQS Standard handles it without thinking. Between 10,000 and 100,000, either tool works but Kafka gives you more headroom. Above 100,000, Kafka is the only realistic option unless you're willing to manage hundreds of SQS queues.

4. Consumer Model

This is where the architectural difference between SQS and Kafka becomes most visible.

SQS - Pull and Delete:

A consumer polls the queue, receives a message, processes it, then deletes it. If the consumer crashes before deleting, the message reappears after the visibility timeout. Each message is meant to be handled by a single consumer, but standard queues deliver at least once, so occasional duplicates are possible.

```python
# SQS consumer pattern
import json
import logging

import boto3

log = logging.getLogger(__name__)
sqs = boto3.client('sqs')
# Placeholder URL - substitute your own queue
queue_url = 'https://sqs.us-east-1.amazonaws.com/123456789012/tasks'


def process(body):
    """Placeholder for your business logic."""


while True:
    response = sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=10,   # Batch up to 10
        WaitTimeSeconds=20,       # Long polling - reduces empty receives
        VisibilityTimeout=300     # 5 minutes to process before redelivery
    )
    for message in response.get('Messages', []):
        try:
            body = json.loads(message['Body'])
            process(body)
            # Success - delete message permanently
            sqs.delete_message(
                QueueUrl=queue_url,
                ReceiptHandle=message['ReceiptHandle']
            )
        except Exception as e:
            # Don't delete - message will reappear after visibility timeout
            log.error(f"Failed to process: {e}")
```

Kafka - Offset-Based Consumption:

Consumers track their position (offset) in the partition log. They read events sequentially, advance their offset, and can rewind at any time. Multiple consumer groups can read the same topic independently - each maintains its own offset.

```python
# Kafka consumer pattern
import json
import logging

from kafka import KafkaConsumer

log = logging.getLogger(__name__)

consumer = KafkaConsumer(
    'orders',
    bootstrap_servers='kafka:9092',
    group_id='order-processor',       # Consumer group
    auto_offset_reset='earliest',     # Start from beginning if no offset
    enable_auto_commit=False,         # Manual offset management
    value_deserializer=lambda v: json.loads(v.decode())
)

for message in consumer:
    try:
        process(message.value)  # process() is your business logic (not shown)
        # Commit offset - marks everything up to this message as processed
        consumer.commit()
    except Exception as e:
        log.error(f"Failed to process: {e}")
        # Don't commit. The uncommitted record is redelivered after a restart
        # or rebalance; to retry in-process, seek back to message.offset.
        break  # Or implement retry logic
```

The critical difference - multiple consumers:

With SQS, a message is consumed by one consumer. If three services need the same event, you need SNS fan-out to three separate SQS queues. Each queue is an independent copy.

SQS fan-out pattern:

```
Producer → SNS Topic → SQS Queue A → Service A
                     → SQS Queue B → Service B
                     → SQS Queue C → Service C

(Three copies of every message. Three queues to manage.)
```

With Kafka, multiple consumer groups read the same topic independently. No duplication. No fan-out infrastructure. The data exists once.

Kafka multi-consumer pattern:

```
Producer → Kafka Topic → Consumer Group A (Service A, offset: 50,000)
                       → Consumer Group B (Service B, offset: 48,000)
                       → Consumer Group C (Service C, offset: 50,000)

(One copy of data. Each service reads at its own pace.)
```

This is Kafka's killer feature. When you have an event that three, five, or ten services need to react to - order placed, user signed up, payment completed - Kafka handles it naturally. With SQS, you're building and maintaining SNS fan-out infrastructure, multiplying storage costs, and managing multiple queues.

5. Operational Complexity

This is where SQS wins decisively for small and mid-sized teams.

SQS: Create a queue. Send messages. Receive messages. That's it. No servers to manage, no brokers to tune, no partitions to rebalance, no ZooKeeper or KRaft to babysit. AWS handles scaling, replication, availability, and monitoring. The operational cost is effectively zero.

Kafka (self-managed): You're running a distributed system. Broker configuration, partition rebalancing, ISR (in-sync replica) management, disk capacity planning, JVM tuning, monitoring lag per consumer group, handling broker failures, upgrading without downtime. A production Kafka cluster needs a dedicated team - or at least one engineer who understands it deeply.

Kafka (managed - AWS MSK, Confluent Cloud): Significantly less ops than self-managed, but not zero. You still deal with partition strategy, consumer group management, schema evolution, and capacity planning. The broker infrastructure is managed, but the Kafka-specific operational knowledge is still on you.

Real operational costs people don't mention:

| Operational Task | SQS | Kafka (Self-Managed) | Kafka (MSK/Confluent) |
|---|---|---|---|
| Initial setup | 5 minutes | 2-5 days | 2-4 hours |
| Scaling | Automatic | Manual partition/broker changes | Semi-automatic |
| Monitoring | CloudWatch (built-in) | Prometheus/Grafana + custom dashboards | Partially built-in |
| Upgrades | Invisible (AWS handles it) | Rolling restart, careful planning | Managed, but needs scheduling |
| Failure recovery | Automatic | Manual intervention likely | Mostly automatic |
| Team knowledge needed | Basic AWS | Deep Kafka + distributed systems expertise | Moderate Kafka knowledge |

The honest truth: If your team doesn't have Kafka expertise and you don't have a compelling reason for event replay or multi-consumer patterns, choosing Kafka is choosing to spend engineering time on infrastructure instead of product.

The Full Trade-Off Comparison

| Factor | SQS Standard | SQS FIFO | Kafka |
|---|---|---|---|
| Setup complexity | Trivial | Trivial | Significant |
| Operational burden | Near zero | Near zero | High (self-managed) / Medium (managed) |
| Throughput | Virtually unlimited | Capped (~20K/s) | Millions/second (tuned) |
| Ordering | None guaranteed | Per message group | Per partition |
| Message replay | Not possible | Not possible | Core feature |
| Retention | Max 14 days | Max 14 days | Configurable (unlimited) |
| Multi-consumer | SNS fan-out required | SNS fan-out required | Native (consumer groups) |
| Cost model | Per-request (cheap at low volume) | Per-request (2x standard price) | Infrastructure (expensive at low volume) |
| Delivery guarantee | At-least-once | Exactly-once (within queue) | At-least-once (exactly-once with config) |
| Dead letter queue | Built-in | Built-in | Manual implementation |
| Best mental model | Task queue / mailbox | Ordered task queue | Distributed event log |

Where Each One Breaks - The Section That Matters

Where SQS Breaks

1. You need event replay.

A deployment introduces a bug that corrupts data for events processed in the last 6 hours. With SQS, those messages are deleted. Your recovery options are database backups, application logs, or manual fixes. There is no "reprocess from 6 hours ago."

If your system processes events where a bad deployment could require reprocessing, SQS is fundamentally the wrong tool. This isn't a limitation you can work around - it's a design decision baked into SQS's architecture.

2. Multiple services need the same event.

You can work around this with SNS fan-out, but it's a kludge at scale. Every new consumer means a new SQS queue, a new SNS subscription, and another copy of every message. At 10 consumers processing 50,000 events/day, you're storing and delivering 500,000 messages daily instead of 50,000.

```
# SQS + SNS fan-out cost math at scale

Events per day: 1,000,000
Consumers: 8

SQS approach:   8 queues × 1,000,000 messages = 8,000,000 SQS requests/day
                + 1,000,000 SNS publishes + 8,000,000 SNS deliveries

Kafka approach: 1,000,000 messages written once
                8 consumer groups read independently
                Total messages stored: 1,000,000
```

3. You need strict ordering at high throughput.

SQS FIFO's throughput cap (300 API calls/second per action without high-throughput mode) is a hard wall. If you need ordered processing of 100,000 events/second partitioned by user ID, you'd need custom sharding logic across potentially hundreds of FIFO queues. At that point, you've built a worse version of Kafka's partitioning model.

4. You need long-term event storage.

SQS retains messages for maximum 14 days. If you need a 90-day audit trail or the ability to rebuild a service's state from the event stream, SQS can't help. You'd need to archive messages to S3 or a database separately - which means building event storage infrastructure that Kafka provides out of the box.

5. Consumer processing is slow or variable.

SQS visibility timeout defines how long a consumer has to process a message before it's redelivered. If processing takes longer than expected, the message reappears and gets processed twice. You can extend the timeout, but you're guessing. With variable processing times, you'll either set it too short (duplicates) or too long (slow retry on failure).
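One common way to stop guessing is a heartbeat: keep the timeout short and extend it while the handler is still running. The sketch below assumes a boto3-style SQS client passed in as `sqs`. `change_message_visibility` and `delete_message` are real client calls, but `process_with_heartbeat` and its parameters are names invented here.

```python
import threading


def process_with_heartbeat(sqs, queue_url, message, handler,
                           extend_to_seconds=60, interval_seconds=30):
    """Run handler(message), pushing the visibility timeout out while it runs."""
    done = threading.Event()

    def heartbeat():
        # wait() returns False on timeout and True once done is set.
        while not done.wait(interval_seconds):
            sqs.change_message_visibility(
                QueueUrl=queue_url,
                ReceiptHandle=message["ReceiptHandle"],
                VisibilityTimeout=extend_to_seconds,
            )

    worker = threading.Thread(target=heartbeat, daemon=True)
    worker.start()
    try:
        handler(message)
    finally:
        done.set()
        worker.join()
    # Reached only if the handler did not raise.
    sqs.delete_message(QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"])
```

If the handler raises, the message is not deleted and becomes visible again once the last extension expires, so failures still retry reasonably quickly. The interval should stay comfortably below the extension length.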

Where Kafka Breaks

1. Your team lacks distributed systems expertise.

Kafka looks simple in tutorials. In production, you'll deal with consumer rebalancing storms, stuck consumers, partition skew, broker disk full scenarios, offset management bugs, and schema evolution headaches. If nobody on your team has operated Kafka before, budget 2-3 months of learning curve before it's production-stable.

Symptoms of a team not ready for Kafka:

- Consumer lag growing and nobody knows why

- Partition count chosen arbitrarily (12 because "it felt right")

- No schema registry - producers change message formats and consumers break

- No monitoring for consumer group lag

- Manual offset management with no dead letter strategy

2. The system is low-scale.

Running Kafka for 500 messages/minute is like driving a semi truck to pick up groceries. MSK's minimum cost is roughly $200-400/month for a small cluster. SQS for the same workload costs pennies.
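The SQS side of that comparison is simple arithmetic, sketched here. The $0.40 per million requests is the figure used in this article. `requests_per_message` is an assumption added for illustration, since sending, receiving, and deleting a message are each billed as requests, and the free tier is ignored.

```python
def sqs_monthly_cost(messages_per_minute, price_per_million=0.40,
                     requests_per_message=1, days=30):
    """Rough monthly SQS request cost in dollars (no free tier, no data transfer)."""
    messages = messages_per_minute * 60 * 24 * days
    requests = messages * requests_per_message
    return requests / 1_000_000 * price_per_million


# One billed request per message
one_request = sqs_monthly_cost(500)
# Unbatched send + receive + delete
three_requests = sqs_monthly_cost(500, requests_per_message=3)
```

Even under the more pessimistic three-requests assumption, the result stays in the tens of dollars per month at this volume.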

Cost comparison - 500 messages/minute:

```
SQS Standard:
  500 × 60 × 24 × 30 = 21,600,000 messages/month
  Cost: ~$8.64/month (at $0.40 per million requests, counting one request per message)

Kafka (MSK, smallest cluster):
  3 × kafka.t3.small brokers
  Cost: ~$200-400/month + storage

Kafka is 25-50x more expensive at this scale.
```

3. You need simple task queuing with dead letter handling.

Kafka doesn't have a built-in dead letter queue. You need to build one - a separate topic where failed messages are published, with retry logic, backoff, and monitoring. SQS gives you this out of the box with a few lines of configuration.

SQS dead letter queue: built-in, zero code. Just configure the redrive policy on the source queue (here, a message moves to the DLQ after 3 failed receives):

```json
{
  "RedrivePolicy": {
    "deadLetterTargetArn": "arn:aws:sqs:us-east-1:123456789012:my-dlq",
    "maxReceiveCount": 3
  }
}
```

```python
# Kafka dead letter handling - you build it yourself
import time

from kafka import KafkaProducer

producer = KafkaProducer(bootstrap_servers='kafka:9092')


def process_with_retry(consumer, max_retries=3):
    # Assumes the consumer was created with enable_auto_commit=False
    for message in consumer:
        # kafka-python exposes headers as a list of (key, bytes) tuples
        headers = dict(message.headers or [])
        retries = int(headers.get('retry_count', b'0').decode())
        try:
            process(message.value)  # your business logic (not shown)
        except Exception as e:
            if retries >= max_retries:
                # Send to dead letter topic
                producer.send(
                    'orders.dlq',
                    key=message.key,
                    value=message.value,
                    headers=[
                        ('original_topic', b'orders'),
                        ('error', str(e).encode()),
                        ('failed_at', str(time.time()).encode())
                    ]
                )
            else:
                # Publish to a separate retry topic with an incremented retry
                # count (a consumer on that topic re-runs it, ideally after a delay)
                producer.send(
                    'orders.retry',
                    key=message.key,
                    value=message.value,
                    headers=[('retry_count', str(retries + 1).encode())]
                )
        # Success, retry, or DLQ: either way, move past this message
        consumer.commit()
```

That's a lot of code for something SQS handles with a JSON configuration. If your primary use case is "process tasks, retry failures, alert on poison messages," SQS is dramatically simpler.

4. You want exactly-once processing without complexity.

Kafka supports exactly-once semantics (EOS), but enabling it requires enable.idempotence=true, transactional producers, isolation.level=read_committed on consumers, and careful application design. One misconfiguration and you're back to at-least-once with duplicates.

SQS FIFO provides exactly-once delivery (within the deduplication window) by default. Set a MessageDeduplicationId and SQS handles it.
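The phrase "within the deduplication window" matters. The following toy model shows the idea: IDs are remembered for a fixed interval (five minutes for SQS FIFO) and forgotten afterwards. `DedupWindow` is a hypothetical class for illustration, not how SQS is implemented internally.

```python
class DedupWindow:
    """Drops messages whose deduplication ID was already seen within the window."""

    def __init__(self, window_seconds=300):
        self.window_seconds = window_seconds
        self._seen = {}  # dedup_id -> time it was first accepted

    def accept(self, dedup_id, now):
        # Forget IDs that have aged out of the window.
        self._seen = {k: t for k, t in self._seen.items()
                      if now - t < self.window_seconds}
        if dedup_id in self._seen:
            return False
        self._seen[dedup_id] = now
        return True


window = DedupWindow()
results = [
    window.accept("evt-001", now=0),     # first time: accepted
    window.accept("evt-001", now=10),    # retry inside the window: dropped
    window.accept("evt-002", now=20),    # different ID: accepted
    window.accept("evt-001", now=400),   # window has passed: accepted again
]
```

The last call is the caveat: a producer that retries after the window has expired will create a duplicate, so consumers that cannot tolerate duplicates still need their own idempotency check.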

5. You get the partitioning strategy wrong.

Kafka's ordering and parallelism depend entirely on partitioning. Choose the wrong partition key and you get:

- Hot partitions: One partition receives 80% of traffic while others sit idle. Your "parallel" consumers are bottlenecked on a single partition.

- Ordering violations: Events that should be ordered end up on different partitions and processed out of sequence.

- Rebalancing pain: Adding partitions doesn't redistribute existing data. Historical events remain in old partitions, and new events for an existing key can hash to a different partition than before.

```python
# Bad partition key - creates hot partitions
# If 60% of orders are from "US", one partition gets 60% of traffic
producer.send('orders', key=b'US', value=order_data)

# Good partition key - distributes evenly
# Each order gets its own stream, load distributes across partitions
producer.send('orders', key=f'order-{order_id}'.encode(), value=order_data)

# Also good - user-level ordering with even distribution
producer.send('events', key=f'user-{user_id}'.encode(), value=event_data)
```

Getting partitioning right requires understanding your data distribution upfront. With SQS, there's no partitioning decision to get wrong.
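Key skew can be estimated before touching a cluster. This sketch hashes keys to partitions and counts the load. It uses an MD5-based stand-in rather than the murmur2 hash Kafka's default partitioner uses, so the exact partition numbers will differ from a real cluster, and the traffic mix is made up.

```python
import hashlib
from collections import Counter


def partition_for(key, num_partitions):
    """Stable hash of the key modulo partition count (stand-in for murmur2)."""
    digest = hashlib.md5(key.encode()).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


def partition_load(keys, num_partitions):
    """Count how many messages land on each partition."""
    return Counter(partition_for(k, num_partitions) for k in keys)


# 1,000 orders: 60% from "US", the rest spread over four other countries.
countries = ["US"] * 600 + ["DE", "FR", "JP", "BR"] * 100
order_ids = [f"order-{i}" for i in range(1000)]

by_country = partition_load(countries, num_partitions=8)
by_order = partition_load(order_ids, num_partitions=8)

# Share of all traffic carried by the busiest partition under each key choice.
hottest_by_country = max(by_country.values()) / 1000
hottest_by_order = max(by_order.values()) / 1000
```

With the country key, the busiest partition carries at least 60% of the traffic and some partitions receive nothing. With per-order keys the load spreads roughly evenly. Running the same check against a sample of real keys is a cheap way to validate a key choice.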

Real-World Use Cases - When to Reach for What

Use SQS When:

Background job processing. Sending emails, generating PDFs, processing uploads, resizing images. A user action triggers a task, a worker picks it up and processes it. The task is executed once and doesn't need to be replayed.

```python
# Classic SQS use case: async email sending
import json

import boto3

sqs = boto3.client('sqs')


def handle_user_signup(user):
    # Save user to database (synchronous, fast)
    db.create_user(user)  # db is your data-access layer (not shown)

    # Queue email sending (asynchronous, slow)
    sqs.send_message(
        QueueUrl=email_queue_url,  # URL of the email queue (configured elsewhere)
        MessageBody=json.dumps({
            "type": "welcome_email",
            "user_id": user.id,
            "email": user.email
        })
    )
    return {"status": "created"}  # Return immediately, email sends later
```

Simple service decoupling. Service A needs to tell Service B to do something, but doesn't need to wait for the result. SQS is the buffer.

AWS Lambda integration. SQS + Lambda is the simplest event-driven architecture on AWS. Lambda polls the queue, processes messages in batches, handles retries and DLQ automatically. Zero infrastructure to manage.

```yaml
# SAM/CloudFormation - SQS triggering Lambda
Transform: AWS::Serverless-2016-10-31  # Required for AWS::Serverless::* resources

Resources:
  ProcessorFunction:
    Type: AWS::Serverless::Function
    Properties:
      Handler: handler.process
      Runtime: python3.12
      Events:
        SQSEvent:
          Type: SQS
          Properties:
            Queue: !GetAtt TaskQueue.Arn
            BatchSize: 10
            MaximumBatchingWindowInSeconds: 5

  TaskQueue:
    Type: AWS::SQS::Queue
    Properties:
      VisibilityTimeout: 300
      RedrivePolicy:
        deadLetterTargetArn: !GetAtt DLQ.Arn
        maxReceiveCount: 3

  DLQ:
    Type: AWS::SQS::Queue
```

Workloads where operational simplicity is the priority. Small teams, early-stage products, systems where the messaging layer should be invisible - not a project in itself.

Use Kafka When:

Event-driven architecture with multiple consumers. An "order placed" event triggers inventory updates, payment processing, email notifications, analytics tracking, and warehouse fulfillment. All five services consume the same event independently.

```python
# Kafka event-driven architecture
# Single producer, multiple independent consumers

# Producer - writes once
producer.send('order-events', key=f'order-{order_id}'.encode(), value={
    "event_type": "order_placed",
    "order_id": order_id,
    "user_id": user_id,
    "items": items,
    "total": total,
    "timestamp": time.time()
})

# Consumer Group 1: Inventory Service
#   Decrements stock for ordered items
# Consumer Group 2: Payment Service
#   Initiates payment processing
# Consumer Group 3: Notification Service
#   Sends order confirmation email
# Consumer Group 4: Analytics Pipeline
#   Updates real-time dashboards
# Consumer Group 5: Warehouse Service
#   Creates fulfillment order

# All five read the same event from the same topic.
# Each at their own pace. No fan-out infrastructure.
```

Analytics and data pipelines. Clickstream data, user activity logs, application metrics - high-volume event streams that feed into data warehouses, real-time dashboards, or ML pipelines. Kafka's throughput and retention make it the natural fit.

Event sourcing and CQRS. If your architecture stores state as a sequence of events (rather than current state), Kafka is your event store. Events are immutable, ordered, and replayable - exactly what event sourcing needs.

Systems where replay is a requirement. Audit trails, compliance logging, debugging production issues. If "reprocess the last 24 hours of events" is a legitimate operational need, Kafka is the only option.

Cross-system data synchronization. Keeping data consistent across microservices. Service A owns user data and publishes change events to Kafka. Services B, C, and D maintain their own local views by consuming those events. This is Change Data Capture (CDC) - and Kafka with Debezium is the standard implementation.

The Hybrid Pattern - Using Both

This is the pattern most articles ignore, and it's what many production systems actually do.

Use Kafka for event streaming. Use SQS for task processing.

The events in Kafka represent "what happened." The messages in SQS represent "what needs to be done." These are different concerns, and mixing them in a single system creates unnecessary complexity.

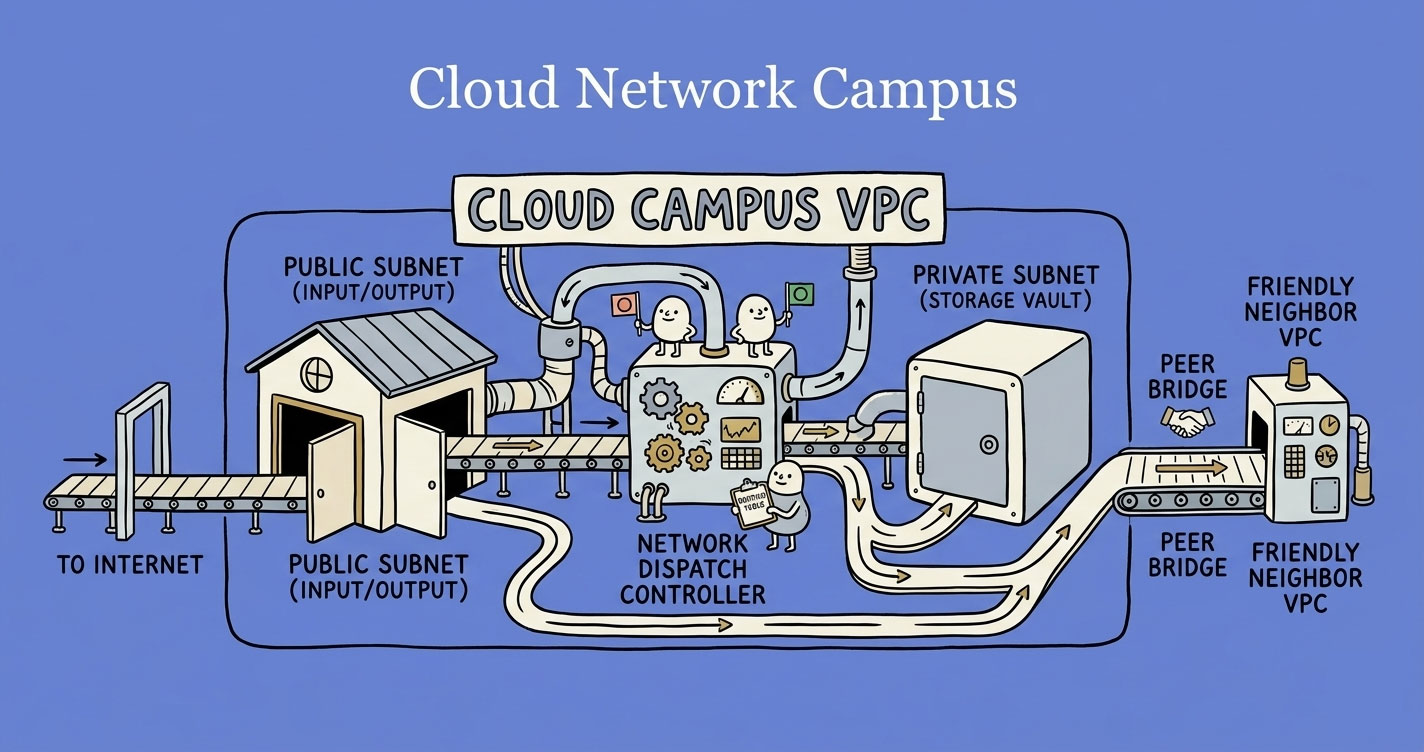

Hybrid architecture:

```
User places order
        │
        ▼
┌─────────────────┐
│  Order Service  │
│  (publishes     │──────▶ Kafka Topic: "order-events"
│   event)        │        (event stream - what happened)
└─────────────────┘                │
                                   ├──▶ Analytics Consumer (real-time dashboards)
                                   ├──▶ Search Indexer (update product search)
                                   ├──▶ Audit Service (compliance logging)
                                   │
                                   └──▶ Task Router Service
                                                │
                        ┌───────────────────────┼───────────────────────┐
                        ▼                       ▼                       ▼
                SQS: Email Queue       SQS: Payment Queue    SQS: Fulfillment Queue
                        │                       │                       │
                        ▼                       ▼                       ▼
                  Email Worker           Payment Worker         Warehouse Worker
                  (send email)            (charge card)         (create shipment)
```

Why this works:

- Kafka handles the streaming concerns: multiple consumers, replay, event history, real-time analytics.

- SQS handles the task concerns: retries, dead letter queues, visibility timeouts, simple worker processing.

- Each tool does what it's best at. No forcing Kafka to be a task queue (it's bad at it). No forcing SQS to be an event stream (it can't).

The Task Router Service is the bridge. It's a Kafka consumer that reads events and creates specific SQS messages for task workers. This separation means your task workers don't need to understand Kafka at all - they just pull from SQS, process, delete. Dead simple.

```python
# Task Router Service - bridges Kafka events to SQS tasks
import json

import boto3
from kafka import KafkaConsumer

consumer = KafkaConsumer(
    'order-events',
    bootstrap_servers='kafka:9092',
    group_id='task-router',
    enable_auto_commit=False,  # Commit only after the SQS sends succeed
    value_deserializer=lambda v: json.loads(v.decode())
)
sqs = boto3.client('sqs')


def make_task(task_type):
    """Placeholder transform factory; replace with real per-task builders."""
    return lambda event: {'task': task_type, 'order_id': event.get('order_id')}


make_email_task = make_task('order_email')
make_payment_task = make_task('payment')
make_fulfillment_task = make_task('fulfillment')
make_cancellation_email_task = make_task('cancellation_email')
make_refund_task = make_task('refund')

# The 'queue' values are placeholders for real SQS queue URLs
TASK_ROUTING = {
    'order_placed': [
        {'queue': 'email-queue-url', 'transform': make_email_task},
        {'queue': 'payment-queue-url', 'transform': make_payment_task},
        {'queue': 'fulfillment-queue-url', 'transform': make_fulfillment_task},
    ],
    'order_cancelled': [
        {'queue': 'email-queue-url', 'transform': make_cancellation_email_task},
        {'queue': 'refund-queue-url', 'transform': make_refund_task},
    ],
}

for message in consumer:
    event = message.value
    event_type = event.get('event_type')
    routes = TASK_ROUTING.get(event_type, [])
    for route in routes:
        task = route['transform'](event)
        sqs.send_message(
            QueueUrl=route['queue'],
            MessageBody=json.dumps(task)
        )
    consumer.commit()
```

This hybrid pattern is not overengineering. It's separation of concerns applied to messaging infrastructure. If your system has both "events that multiple services need to see" and "tasks that need reliable processing with retries," using both tools is the cleanest architecture.

AWS-Specific Considerations

Since many teams are building on AWS, here are the platform-specific trade-offs.

SQS + Lambda: The Simplest Pipeline

Lambda natively polls SQS queues, processes messages in batches, and handles failures automatically. No servers. No consumer code. No scaling configuration.

Strengths:

- Zero operational overhead. AWS manages everything.

- Automatic scaling. Lambda scales with queue depth.

- Built-in retry and DLQ support.

- Cost-effective for bursty, low-to-medium volume workloads.

Limitations:

- Lambda's 15-minute execution timeout caps how long a single message can take to process.

- Cold starts add latency to the first message in a burst.

- Concurrency limits (default 1,000 per region) can bottleneck high-throughput queues.

- Cost becomes significant at very high volumes (millions of invocations/day).
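One detail behind the built-in retry behavior: by default, a single failing message causes the whole batch to be retried. A handler can instead report failures per message. This is a minimal sketch that assumes the event source mapping has `ReportBatchItemFailures` enabled (in SAM, via `FunctionResponseTypes`). `process` is a hypothetical stand-in for real business logic.

```python
import json


def process(body):
    """Hypothetical business logic; raises on messages it cannot handle."""
    if "user_id" not in body:
        raise ValueError("missing user_id")


def handler(event, context):
    """SQS-triggered Lambda entry point that reports failures per message."""
    failures = []
    for record in event.get("Records", []):
        try:
            process(json.loads(record["body"]))
        except Exception:
            # Only this message goes back to the queue; the rest are deleted.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Messages listed in `batchItemFailures` become visible again and count toward `maxReceiveCount`, so the DLQ redrive policy still applies to them.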

Kafka on AWS: MSK vs. Self-Managed vs. Confluent

Amazon MSK (Managed Streaming for Apache Kafka): AWS manages the brokers, ZooKeeper/KRaft, patching, and backups. You still manage topics, partitions, consumer groups, and capacity planning. It's "managed Kafka" in the sense that you don't manage EC2 instances - not in the sense that it's as hands-off as SQS.

MSK Serverless: A newer option that removes broker management entirely. You pay per data throughput, not per broker. Closer to the SQS model operationally. But it's more expensive per GB than provisioned MSK and has limitations around partition count and throughput per partition.

Confluent Cloud: Fully managed Kafka with additional features (Schema Registry, ksqlDB, connectors). More opinionated and feature-rich than MSK, but vendor lock-in and higher cost.

Self-managed on EC2/EKS: Maximum control, minimum cost at high scale, maximum operational burden. Only worth it if you have dedicated infrastructure engineers and very specific performance requirements.

| Option | Operational Effort | Cost (low volume) | Cost (high volume) | Kafka Knowledge Needed |
|---|---|---|---|---|
| MSK Provisioned | Medium | High (fixed broker cost) | Moderate | Significant |
| MSK Serverless | Low-Medium | Moderate | High (per-throughput pricing) | Moderate |
| Confluent Cloud | Low | High | High | Moderate |
| Self-Managed | Very High | Low (just EC2 costs) | Low | Expert |

The Decision Framework

Stop debating features. Answer these questions:

1. Do multiple services need the same event?

- Yes → Kafka. Native multi-consumer support without fan-out infrastructure.

- No, it's point-to-point → SQS. One producer, one consumer, done.

2. Do you need to replay events?

- Yes → Kafka. Non-negotiable. SQS cannot replay.

- No → Either works. Move to next question.

3. What's your throughput?

- Under 10,000 messages/second → SQS handles it trivially.

- 10,000-100,000 → Either works, but evaluate future growth.

- Over 100,000 → Kafka. SQS FIFO can't keep up, and managing hundreds of Standard queues is painful.

4. Does your team have Kafka expertise?

- Yes → Kafka is a viable option regardless of scale.

- No → The learning curve is 2-3 months for production readiness. Factor that into your timeline. If the project ships in 4 weeks, use SQS.

5. Is operational simplicity the priority?

- Yes → SQS. No question.

- No, we have infra capacity → Evaluate Kafka based on the other answers.

6. Do you need both event streaming AND task processing?

- Yes → Hybrid. Kafka for events, SQS for tasks. Don't force one tool to do both jobs.
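
The six questions above can be written down as a small function, which is handy for making the reasoning explicit in a design doc. This is a sketch, not a rule engine: the `Requirements` fields and the order in which the checks run are my assumptions, and the 100,000 messages/second threshold is the one from question 3.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    multiple_consumers: bool          # question 1
    needs_replay: bool                # question 2
    messages_per_second: int          # question 3
    team_knows_kafka: bool            # question 4
    simplicity_first: bool            # question 5
    needs_streaming_and_tasks: bool = False  # question 6


def recommend(req: Requirements) -> str:
    """Return 'sqs', 'kafka', 'hybrid', or 'either'."""
    # Assumed precedence: the hybrid case is checked first because it
    # overrides a single-tool answer.
    if req.needs_streaming_and_tasks:
        return "hybrid"
    # Hard requirements that SQS does not cover well.
    if req.multiple_consumers or req.needs_replay:
        return "kafka"
    if req.messages_per_second > 100_000:
        return "kafka"
    # Nothing forces Kafka, so team and operational constraints decide.
    if req.simplicity_first or not req.team_knows_kafka:
        return "sqs"
    return "either"
```

For example, `recommend(Requirements(False, False, 500, False, True))` returns `"sqs"`: nothing forces Kafka, and the team values simplicity.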

Decision tree (simplified):

Need event replay?
├── Yes → Kafka
└── No
    └── Multiple consumers for same event?
        ├── Yes → Kafka
        └── No
            └── Throughput > 100K msg/sec?
                ├── Yes → Kafka
                └── No
                    └── Team has Kafka expertise?
                        ├── Yes → Either (Kafka gives future flexibility)
                        └── No → SQS

Common Mistakes to Avoid

1. Choosing Kafka because "we might need it later."

YAGNI applies to infrastructure too. Migrating from SQS to Kafka when you actually need it is a well-understood process. Starting with Kafka "just in case" costs you months of operational overhead for a capability you may never use.

2. Using Kafka as a task queue.

Kafka is an event log, not a task queue. It doesn't have visibility timeouts, dead letter queues, or per-message retry logic built in. If you're using Kafka like SQS - single consumer, delete after processing, retry on failure - you're using the wrong tool and building features that SQS provides for free.

3. Using SQS for event sourcing.

SQS messages are deleted once they have been processed, and anything left unconsumed expires after the retention period (14 days at most). Event sourcing requires an immutable, replayable log. These are fundamentally incompatible. Don't try to archive SQS messages to S3 and call it event sourcing - that's a fragile, complex approximation of what Kafka does natively.

4. Ignoring the cost model difference.

SQS is cheap at low volume and scales linearly with usage. Kafka has a high fixed cost (broker infrastructure) regardless of volume. At 100 messages/day, SQS costs fractions of a cent. Kafka costs hundreds of dollars. At 100 million messages/day, Kafka's per-message cost is much lower. Model the costs at your actual volume, not in the abstract.
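
A back-of-the-envelope model makes the crossover visible. Every number below is a placeholder assumption, not a quote: a per-request SQS price, three API requests per message with no batching, and a flat monthly Kafka bill that ignores storage, data transfer, and the extra brokers you would need as volume grows. Swap in your own figures before drawing conclusions.

```python
# All constants are illustrative assumptions; replace with your real pricing.
SQS_PRICE_PER_MILLION_REQUESTS = 0.40  # assumed USD price, Standard queue
REQUESTS_PER_MESSAGE = 3               # send + receive + delete, no batching
KAFKA_FIXED_MONTHLY_USD = 600.0        # assumed small managed cluster
DAYS_PER_MONTH = 30


def sqs_monthly_cost(messages_per_day: int) -> float:
    """Usage-based cost: grows linearly with message volume."""
    requests = messages_per_day * DAYS_PER_MONTH * REQUESTS_PER_MESSAGE
    return requests / 1_000_000 * SQS_PRICE_PER_MILLION_REQUESTS


def kafka_monthly_cost(messages_per_day: int) -> float:
    """Fixed cost: the brokers are billed whether or not traffic arrives."""
    return KAFKA_FIXED_MONTHLY_USD


def break_even_messages_per_day() -> float:
    """Daily volume at which the two monthly bills are equal."""
    per_message = REQUESTS_PER_MESSAGE * SQS_PRICE_PER_MILLION_REQUESTS / 1_000_000
    return KAFKA_FIXED_MONTHLY_USD / (per_message * DAYS_PER_MONTH)


if __name__ == "__main__":
    for volume in (100, 1_000_000, 100_000_000):
        print(volume, sqs_monthly_cost(volume), kafka_monthly_cost(volume))
    print("break-even msgs/day:", break_even_messages_per_day())
```

With these placeholder inputs, 100 messages/day costs well under a cent per month on SQS, while the assumed Kafka bill stays at its fixed floor. Batching SQS requests or resizing the cluster moves the break-even point considerably, which is the reason to model it yourself.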

5. Not monitoring consumer lag (Kafka).

Consumer lag - the gap between the latest produced message and the latest consumed message - is the single most important Kafka metric. Growing lag means your consumers can't keep up. Ignore it, and you'll wake up to a consumer that's hours behind, processing stale events.
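
Lag is simple arithmetic per partition: log-end offset minus the offset the consumer group has committed. Below is a minimal sketch of an alert check built on that definition. The `PartitionOffsets` type and the `max_lag` threshold are hypothetical, and the sample numbers are the ones from the CLI output in this section. In practice you would pull the offsets from your monitoring system or the CLI rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PartitionOffsets:
    topic: str
    partition: int
    current_offset: int  # offset the consumer group has committed
    log_end_offset: int  # latest offset written by producers

    @property
    def lag(self) -> int:
        return self.log_end_offset - self.current_offset


def lagging_partitions(offsets, max_lag):
    """Return the partitions whose lag exceeds max_lag."""
    return [p for p in offsets if p.lag > max_lag]


sample = [
    PartitionOffsets("orders", 0, 45230, 45235),
    PartitionOffsets("orders", 1, 44891, 44891),
    PartitionOffsets("orders", 2, 45102, 46300),
]

if __name__ == "__main__":
    for p in lagging_partitions(sample, max_lag=1000):
        print(f"{p.topic}[{p.partition}] is behind by {p.lag} messages")
```

A single snapshot tells you less than the trend. Lag that keeps growing across checks is the signal to act on.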

# Check consumer lag - should be near zero in a healthy system
kafka-consumer-groups.sh \
  --bootstrap-server kafka:9092 \
  --group order-processor \
  --describe

# Output:
# TOPIC   PARTITION  CURRENT-OFFSET  LOG-END-OFFSET  LAG
# orders  0          45230           45235           5
# orders  1          44891           44891           0
# orders  2          45102           46300           1198  ← Problem

6. Not setting up a dead letter strategy for Kafka.

SQS has DLQ built-in. Kafka does not. If you don't build a dead letter topic and retry mechanism, a poison message will block the consumer forever (or get skipped silently, depending on your error handling). Both outcomes are bad.
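
One way to avoid both outcomes is a bounded retry followed by a publish to a dead letter topic. The sketch below shows that control flow only. `publish` is a hypothetical callable standing in for whatever Kafka producer you use, and the `orders.dlq` topic name and the retry budget of three are assumptions.

```python
from typing import Callable

MAX_ATTEMPTS = 3  # assumed retry budget


def handle_with_dead_letter(
    message: bytes,
    process: Callable[[bytes], None],
    publish: Callable[[str, bytes, dict], None],
    dead_letter_topic: str = "orders.dlq",
    max_attempts: int = MAX_ATTEMPTS,
) -> bool:
    """Try to process a message; park it on the dead letter topic if it keeps failing.

    Returns True if processing succeeded, False if the message was dead-lettered.
    Either way the caller can commit the offset, so the partition keeps moving.
    """
    last_error = None
    for _ in range(max_attempts):
        try:
            process(message)
            return True
        except Exception as exc:
            last_error = exc  # retried immediately; add backoff as needed
    # Keep the failure reason with the message so it can be triaged later.
    headers = {"error": repr(last_error), "attempts": str(max_attempts)}
    publish(dead_letter_topic, message, headers)
    return False
```

The design choice here is that a poison message is never dropped silently and never blocks the partition. It is moved aside with enough context to debug and replay it later.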

Summary: What You Should Actually Do

Use SQS if you want simplicity. Background jobs, async task processing, service decoupling, Lambda integrations. If your messaging needs are "send a task, process it, move on," SQS is the right tool. Don't apologize for not using Kafka.

Use Kafka if you need replay, streaming, and multi-consumer patterns. Event-driven architectures, analytics pipelines, event sourcing, CDC, real-time data synchronization. If multiple services need the same events, or you need to reprocess historical events, Kafka is the right tool.

Use both if your system has both needs. Kafka for the event stream. SQS for task execution. This isn't overengineering - it's using each tool where it's strongest. Many production systems at scale run this exact pattern.

Regardless of which you choose:

- Model the cost at your actual volume. The cheapest option at 1,000 messages/day is not the cheapest at 100 million.

- Start with what your team knows. A well-operated SQS setup beats a poorly operated Kafka cluster every time.

- Plan for the migration path. If you start with SQS and later need Kafka, design your producers with clean event schemas from day one. The migration will be moving consumers, not redesigning events.

- Monitor relentlessly. Queue depth for SQS. Consumer lag for Kafka. These are the canaries in your messaging coal mine.
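
On the migration-path point, "clean event schemas" can be as small as a versioned envelope that knows nothing about the transport. Here is a minimal sketch. The field names are my assumptions, not a standard, and the same JSON string could be sent as an SQS message body today and as a Kafka record value later.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    type: str                # e.g. "order.placed" (hypothetical event name)
    payload: dict            # business data only, no transport details
    schema_version: int = 1  # bump when the payload shape changes
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    occurred_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to a transport-neutral JSON string."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "Event":
        """Rebuild an event from its JSON form."""
        return cls(**json.loads(raw))
```

Because producers emit the envelope and consumers parse it, swapping the transport underneath touches the send and receive calls but not the event definitions.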

The best messaging system is the one your team can operate confidently at your current scale, with a clear path to what you'll need next.